The Uniform Tire Quality Grading system was introduced in 1979 to give consumers a way to compare tires from different manufacturers using consistent measures. Forty-six years later, almost no one understands what the numbers actually mean, and tire shopping advice on the internet usually treats UTQG ratings as either gospel or worthless. Both readings are wrong. The ratings are useful within specific limits, they’re misleading outside those limits, and the limits are not where most people think they are. Here’s what the three components of a UTQG rating actually measure, and how I use them when I’m helping someone shop.

Key takeaways

- Treadwear ratings are useful for comparing tires within a single manufacturer’s lineup but unreliable across brands

- Traction ratings (AA, A, B, C) measure straight-line wet braking only — they say nothing about cornering grip

- Temperature ratings (A, B, C) measure heat resistance at sustained speed, which matters more than most buyers realize

- “Higher treadwear = longer life” is a rough approximation that breaks down at the extremes

- Summer performance tires almost always have lower treadwear ratings than all-seasons because of compound, not quality

What’s actually on the sidewall

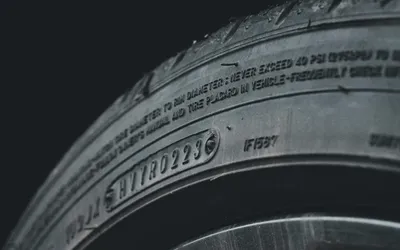

Look at any tire’s sidewall and you’ll find something like “TREADWEAR 320 TRACTION AA TEMPERATURE A.” Each of those three pieces is a separate measurement under a separate test, and treating them as a single quality score is the first mistake that leads to bad shopping decisions.

Treadwear is the number — typically anywhere from 60 (track-only tires) to 800+ (long-life touring tires). Traction is a letter from AA (best) to C. Temperature is a letter from A (best) to C. Each one is determined under standardized testing conditions specified by the National Highway Traffic Safety Administration. Manufacturers test their own tires and self-certify, with NHTSA reserving the right to verify or recall ratings if testing reveals discrepancies.

The fact that manufacturers self-certify is the central reason cross-brand comparisons get unreliable, and we’ll get to that.

Treadwear: what it measures, what it doesn’t

The treadwear test is run on a specific course in West Texas using a convoy of vehicles that drive a measured route over thousands of miles. The tested tire is compared against a control tire (a Course Monitoring Tire, or CMT) and given a rating that expresses how slowly it wore relative to the control, with the control tire as the 100 baseline.

A treadwear rating of 100 means the tire wore at the same rate as the control. A 200 rating means it wore at half the rate. A 600 rating means it wore at one-sixth the rate. The math is straightforward in theory.
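As a quick check on that arithmetic, here is a minimal Python sketch. The function name `relative_wear_rate` is my own, not part of any standard, and it assumes the simple inverse relationship described above.

```python
def relative_wear_rate(treadwear_rating: float) -> float:
    """Wear rate relative to the control tire (1.0 = same rate as the control).

    Assumes the simple inverse relationship: rating 100 is the baseline,
    and doubling the rating halves the wear rate.
    """
    if treadwear_rating <= 0:
        raise ValueError("treadwear rating must be positive")
    return 100.0 / treadwear_rating


if __name__ == "__main__":
    for rating in (100, 200, 600):
        # Print each rating next to its wear rate as a fraction of the control's.
        print(rating, round(relative_wear_rate(rating), 3))
```

Running it prints 1.0, 0.5, and 0.167 for ratings of 100, 200, and 600, matching the same-rate, half-rate, and one-sixth-rate figures.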

In practice, the rating only describes how the specific tested tire behaved on the specific course under the specific conditions. The actual tread life you’ll see depends on your driving style, road surface composition, alignment, rotation discipline, climate, vehicle weight distribution, and several other factors that the test doesn’t account for. A tire rated 500 might give one driver 50,000 miles and another driver 30,000 miles, and both numbers can be defensible.

What the rating is genuinely useful for: comparing tires within a single manufacturer’s lineup. If Michelin offers two summer tires and one is rated 320 and the other is rated 240, the 320 will almost certainly outlast the 240 in normal use, by something close to that ratio. The same applies within Continental, Bridgestone, Goodyear, and others.
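To make the within-brand rule concrete, here is a small Python sketch. The helper `within_brand_life_ratio` is hypothetical, and returning `None` for different brands is just my way of encoding "don't compare these," not anything the UTQG standard defines.

```python
from typing import Optional


def within_brand_life_ratio(
    brand_a: str, rating_a: int, brand_b: str, rating_b: int
) -> Optional[float]:
    """Rough expected-life ratio of tire A to tire B, same brand only.

    Returns None when the brands differ, because the ratings were
    self-certified by different manufacturers.
    """
    if rating_a <= 0 or rating_b <= 0:
        raise ValueError("treadwear ratings must be positive")
    if brand_a.strip().lower() != brand_b.strip().lower():
        return None
    return rating_a / rating_b


if __name__ == "__main__":
    # Two tires from one lineup: the ratio is a usable rough estimate.
    print(within_brand_life_ratio("Michelin", 320, "Michelin", 240))
    # Different brands: no ratio is offered.
    print(within_brand_life_ratio("Michelin", 320, "Continental", 240))
```

For the 320 versus 240 example, the ratio comes out to about 1.33, so the 320 should last roughly a third longer in similar use.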

What the rating is not useful for: comparing tires across manufacturers. Because manufacturers self-certify and have some latitude in how they conduct the testing, a 400 rating from one manufacturer is not directly comparable to a 400 from another. Investigations going back to the 2000s have documented meaningful differences in how brands rate similar tires. Treating cross-brand treadwear comparisons as authoritative is the most common UTQG mistake.

Traction: a narrow measure, frequently misread

The traction rating tests one thing: a tire’s ability to produce wet braking force on two specific surfaces (asphalt and concrete) under straight-line deceleration. Tests are conducted at 40 mph with the tire fully locked on a wet test surface. The results are translated into a coefficient of friction and bucketed into the AA, A, B, or C ratings.
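The bucketing step can be sketched in Python as follows. The threshold values are the commonly cited cutoffs as I remember them, so treat them as assumptions and check them against the current federal standard before relying on them. The function name and the both-surfaces-must-pass logic are my own illustration.

```python
# Assumed minimum coefficients (asphalt, concrete) for each grade.
# Commonly cited figures, NOT verified against the regulation text.
ASSUMED_THRESHOLDS = [
    ("AA", 0.54, 0.38),
    ("A", 0.47, 0.35),
    ("B", 0.38, 0.26),
]


def traction_grade(mu_asphalt: float, mu_concrete: float,
                   thresholds=ASSUMED_THRESHOLDS) -> str:
    """Return the best grade whose cutoffs are exceeded on BOTH surfaces."""
    for grade, min_asphalt, min_concrete in thresholds:
        if mu_asphalt > min_asphalt and mu_concrete > min_concrete:
            return grade
    return "C"  # anything below the B cutoffs on either surface


if __name__ == "__main__":
    print(traction_grade(0.60, 0.40))
    print(traction_grade(0.60, 0.36))
```

The second call shows the weaker surface setting the grade: strong asphalt grip does not rescue a concrete result below the AA cutoff.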

Notice what this doesn’t measure. It doesn’t measure cornering grip, wet or dry. It doesn’t measure dry braking. It doesn’t measure wet handling at speeds above 40 mph. It doesn’t measure aquaplaning resistance, which is a function of tread design and depth more than compound. A tire can have an AA traction rating and still feel unpredictable when you ask it to corner hard in the rain.

Most modern performance tires achieve A or AA ratings, which means the rating doesn’t differentiate well between competitors at the top of the market. If you’re cross-shopping the Michelin Pilot Sport 5, the Continental ExtremeContact Sport 02, the Goodyear Eagle F1 Asymmetric 6, and the Bridgestone Potenza Sport, all four will carry AA traction. The actual wet handling differences between them are real and substantial, but the UTQG rating won’t tell you about them.

The rating becomes useful at the bottom end. A B or C traction rating on a passenger tire is a meaningful warning sign, especially on a daily-driven car in a region that gets rain. AA versus A is mostly a tiebreaker.

Temperature: the rating most owners ignore

The temperature rating measures a tire’s ability to resist and dissipate heat at sustained speed. Test tires are run on a dynamometer at progressively higher speeds for specified durations, and the grade reflects the highest speed band at which the tire stays intact: roughly 85 to 100 mph for C, 100 to 115 mph for B, and above 115 mph for A. A is best, B is acceptable for most use, C is the legal minimum for passenger tires.

This is the rating that matters most for highway driving in summer in hot regions, and the one that most buyers ignore. A B-rated tire isn’t a problem on a 60°F spring drive, but the same tire under sustained 80 mph driving on a 100°F highway with a fully loaded vehicle is operating closer to its design limits than an A-rated equivalent.

Most modern tires are rated A or B. C ratings on passenger tires are uncommon now. If you live somewhere with sustained hot weather, drive at highway speeds for extended periods, or routinely tow, defaulting to A-rated tires is reasonable insurance.

Where the system breaks down

The UTQG rating system has structural problems that have been criticized for decades.

First, the self-certification element. Manufacturers run their own tests and report their own results, and the wide variance in cross-brand ratings reflects different testing approaches and different incentives. NHTSA has limited resources to verify ratings, and meaningful enforcement action against bad ratings is rare.

Second, the testing windows are narrow. The treadwear course represents one type of road in one climate. The traction test is straight-line wet braking only. The temperature test is one specific high-speed scenario. Real driving covers a much wider envelope than any of these tests measure.

Third, the rating system hasn’t been meaningfully updated since the 1970s, while tire technology has changed substantially. Modern compounds, multi-compound treads, asymmetric tread patterns, and silica-rich rubber technologies all interact with the test methodologies in ways the original system didn’t anticipate.

Fourth, summer performance tires inherently rate lower on treadwear because their compounds are designed for grip at the cost of longevity. A 200 treadwear rating on a max-performance summer tire is normal, not poor — it reflects the design choice. Comparing that 200 to a 700 on a touring all-season is comparing different categories of tire that aren’t trying to do the same thing.

How to actually use UTQG when shopping

The honest framework:

Use treadwear within a single manufacturer’s lineup to compare expected life between similar tires. A 480-rated tire from Continental will almost certainly outlast a 320-rated tire from Continental in similar use.

Use traction at the C and B levels as a warning sign, not as a tiebreaker between AA tires. If you’re cross-shopping high-performance tires, the UTQG traction rating won’t tell you which one handles better.

Use temperature as a real consideration if you drive in hot conditions at highway speeds or carry heavy loads. The A rating buys you margin.

For everything else, rely on independent tire testing — Tire Rack’s surveys, professional reviews from outlets that do back-to-back comparisons, and longitudinal owner data from forums and review sites. Those sources address the questions UTQG was never designed to answer.
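The framework above can be sketched as a small Python helper. The names `Utqg`, `parse_utqg`, and `shopping_notes` are hypothetical, and the sidewall pattern assumes the wording from the earlier example (“TREADWEAR 320 TRACTION AA TEMPERATURE A”); real sidewalls may format it differently.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Utqg:
    treadwear: int
    traction: str      # "AA", "A", "B", or "C"
    temperature: str   # "A", "B", or "C"


# Assumed sidewall wording; case-insensitive, flexible whitespace.
_PATTERN = re.compile(
    r"TREADWEAR\s+(\d+)\s+TRACTION\s+(AA|A|B|C)\s+TEMPERATURE\s+(A|B|C)\b",
    re.IGNORECASE,
)


def parse_utqg(text: str) -> Utqg:
    """Split a sidewall string into its three separate measurements."""
    match = _PATTERN.search(text)
    if match is None:
        raise ValueError(f"no UTQG rating found in: {text!r}")
    return Utqg(int(match.group(1)), match.group(2).upper(), match.group(3).upper())


def shopping_notes(rating: Utqg, hot_or_heavy_use: bool) -> List[str]:
    """Apply the article's rules of thumb; an empty list means no flags."""
    notes = []
    if rating.traction in ("B", "C"):
        notes.append("traction: B/C is a warning sign for wet braking")
    if hot_or_heavy_use and rating.temperature != "A":
        notes.append("temperature: an A rating would buy more heat margin")
    return notes


if __name__ == "__main__":
    tire = parse_utqg("TREADWEAR 320 TRACTION AA TEMPERATURE A")
    print(tire, shopping_notes(tire, hot_or_heavy_use=True))
```

Treadwear is deliberately left out of the flags, since it only means something next to another tire from the same manufacturer.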

Bottom line

UTQG ratings are useful in narrow ways and unreliable as a single quality score. Treadwear comparisons within a brand are trustworthy and across brands are not. Traction ratings flag genuinely poor wet braking but don’t differentiate between strong tires. Temperature ratings matter more than most buyers consider. Combined with independent testing and an honest read of how you actually use your car, UTQG is a useful starting point — but it was never meant to be the whole answer, and treating it that way leads to tire decisions you’ll regret six months later.